AI Model Deployment Solutions

Scale your AI models seamlessly. Reliable, efficient deployment platforms and tools for production-ready inference.

Scalable AI Deployment for 2026

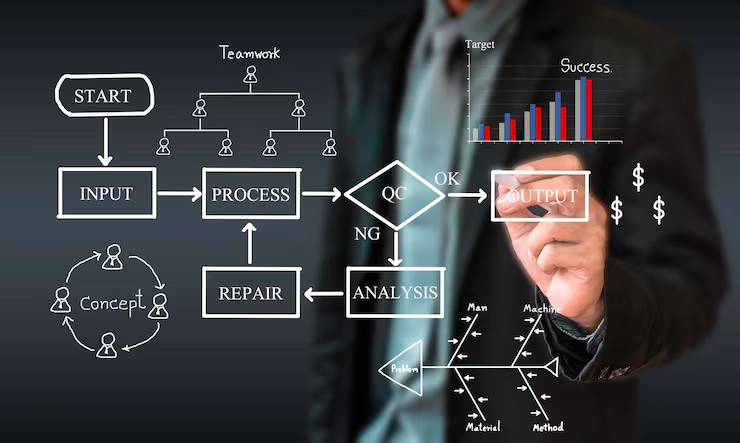

Why Focus on Model Deployment?

A well-designed deployment pipeline helps keep inference reliable, scalable and low-latency while avoiding vendor lock-in.

- Instant Scaling

- Secure & Compliant

- Cloud-Agnostic

- Cost Optimization

Top AI Model Deployment Platforms

End-to-end ML with auto-scaling

⭐ Enterprise Leader

Unified MLOps with AutoML

⭐ AI-Optimized

Full lifecycle management

⭐ Hybrid Cloud

Easy model hosting & inference

⭐ Community Favorite

Kubernetes-based AI hosting

⭐ Developer-Friendly

Fast deployment for ML teams

⭐ MLOps Focused

Quick apps for ML models

⭐ Free Tier

Static sites to full ML services

⭐ Versatile

Essential Deployment Tools

Docker

Containerization for consistent environments

Kubernetes

Orchestration for scaling deployments

TensorFlow Serving

High-performance model serving

TorchServe

PyTorch model deployment framework

BentoML

Build and deploy ML services

Kubeflow

End-to-end ML workflows on Kubernetes
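TensorFlow Serving and TorchServe both expose HTTP inference endpoints. The following is a minimal sketch that builds those requests with the Python standard library. It assumes the default ports (8501 for TensorFlow Serving's REST API, 8080 for TorchServe's inference API) and uses a hypothetical model name.

```python
import json
from urllib.request import Request

# Default ports; change these if your servers are configured differently.
TF_SERVING_REST_PORT = 8501
TORCHSERVE_INFERENCE_PORT = 8080


def tf_serving_predict_request(host: str, model_name: str, instances: list) -> Request:
    """Build a POST for TensorFlow Serving's REST predict endpoint (row-oriented "instances" format)."""
    url = f"http://{host}:{TF_SERVING_REST_PORT}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return Request(url, data=body, headers={"Content-Type": "application/json"}, method="POST")


def torchserve_predict_request(host: str, model_name: str, payload: dict) -> Request:
    """Build a POST for TorchServe's inference API.

    Assumption: the model's handler accepts a JSON body; the expected shape depends on the handler.
    """
    url = f"http://{host}:{TORCHSERVE_INFERENCE_PORT}/predictions/{model_name}"
    body = json.dumps(payload).encode("utf-8")
    return Request(url, data=body, headers={"Content-Type": "application/json"}, method="POST")
```

To send either request, call `urllib.request.urlopen(req)`. Keeping request construction separate from sending makes it easy to test without a running server.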

Deployment Best Practices

Containerize Models

Ensures portability across environments for consistent deployments.

Implement CI/CD

Automates testing and updates to minimize downtime.
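A CI/CD pipeline usually ends with a gate that decides whether a new model version may replace the one in production. The sketch below is hypothetical. The `EvalResult` fields and the thresholds (at most a 1-point accuracy drop and p95 latency under 200 ms) are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    accuracy: float        # fraction of held-out examples predicted correctly
    p95_latency_ms: float  # 95th-percentile latency measured during the test stage


def should_promote(candidate: EvalResult, baseline: EvalResult,
                   max_accuracy_drop: float = 0.01,
                   max_latency_ms: float = 200.0) -> bool:
    """Return True only if the candidate passes every gate; otherwise the current model keeps serving."""
    if candidate.accuracy < baseline.accuracy - max_accuracy_drop:
        return False  # quality regression beyond the allowed margin
    if candidate.p95_latency_ms > max_latency_ms:
        return False  # too slow for the latency budget
    return True
```

If the gate returns False, the pipeline stops before deployment, so a bad update never reaches production traffic.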

Monitor Performance

Detects issues in real time so you can act before they threaten uptime targets such as 99.9%.
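An uptime target translates directly into an error budget. For example, 99.9% over a 30-day window allows 30 × 24 × 60 × 0.001 = 43.2 minutes of downtime. The following minimal sketch computes that budget and checks measured availability against the target. The request counts used with it are hypothetical.

```python
def allowed_downtime_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of downtime permitted in the window by an availability target such as 0.999."""
    total_minutes = window_days * 24 * 60
    return total_minutes * (1.0 - slo)


def meets_slo(successful_requests: int, total_requests: int, slo: float) -> bool:
    """True if the observed success ratio is at or above the target."""
    if total_requests == 0:
        return True  # no traffic in the window, so nothing was violated
    return successful_requests / total_requests >= slo


print(round(allowed_downtime_minutes(0.999), 1))  # 43.2
```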

Optimize for Cost

Use auto-scaling to match capacity to demand instead of paying for idle resources.
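Auto-scalers typically size a deployment from a utilization ratio. The Kubernetes Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), and it skips the change when the ratio is within a tolerance (0.1 by default). The Python below is a simplified sketch of that rule only. It ignores stabilization windows, pod readiness and missing metrics, and the min/max bounds are assumed values.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA-style scaling decision based on a single metric such as CPU utilization."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid churn
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds; max_replicas caps spend during spikes.
    return max(min_replicas, min(max_replicas, desired))
```

With 4 replicas at 90% CPU and a 60% target, the ratio is 1.5 and the result is 6 replicas. At 15% CPU the same deployment shrinks to 1. Setting `min_replicas` to a small value while keeping `max_replicas` bounded lets costs fall during quiet periods without leaving spikes unbounded.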

Ensure Security

Protect sensitive data with encryption and strict access controls.
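One concrete form of strict access control is per-token permissions on the inference endpoint, where each API token can call only the models it was granted. The following minimal sketch assumes a hypothetical in-memory `grants` mapping. A real deployment would store hashed tokens in a secrets manager rather than plain strings in memory.

```python
import hmac
import secrets
from typing import Dict, Optional, Set


def make_token() -> str:
    """Generate a random, URL-safe API token."""
    return secrets.token_urlsafe(32)


def is_authorized(presented: Optional[str], model_name: str,
                  grants: Dict[str, Set[str]]) -> bool:
    """Allow the call only if the token is known AND was granted access to this model."""
    if not presented:
        return False  # missing credentials are always rejected
    for token, allowed_models in grants.items():
        # compare_digest avoids leaking, via timing, how many characters matched
        if hmac.compare_digest(presented.encode("utf-8"), token.encode("utf-8")):
            return model_name in allowed_models
    return False
```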

Version Control Everything

Track changes to code, configs and models for rollback and reproducibility.
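Model files are large binaries that often live outside the code repository. A common way to tie them to versioned code and configs is a manifest that records each artifact's content hash. In the following sketch, the file names, the `git_commit` value and the manifest fields are all hypothetical.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a (possibly large) file in chunks so it never has to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(model_path: Path, config: dict, git_commit: str, out_path: Path) -> dict:
    """Record which model bytes, config and code commit belong together."""
    manifest = {
        "model_file": model_path.name,
        "model_sha256": sha256_of(model_path),
        "config": config,
        "git_commit": git_commit,
    }
    out_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest
```

Committing the manifest lets a rollback redeploy exactly the artifact whose SHA-256 matches. A hash mismatch flags a model file that changed without a recorded version.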

Ready to Deploy Your Models?

Launch production-grade AI with ease. Scale, secure and optimize your deployments today.
